“…imagine that a mad scientist has developed a means of controlling the human brain at a distance… Would there be even the slightest temptation to impute freedom to her? No. But this mad scientist is nothing more than causal determinism personified…we cannot help but let our notions of freedom and responsibility travel up the puppet’s strings to the hand that controls them.”

–Sam Harris, The Moral Landscape

When data scientists employ machine learning (ML), do moral implications arise from its use? Certainly, the ethics of creating a self-aware machine have been discussed by others at length. But what about the simpler models that are regularly implemented, such as a model that attempts to keep users from changing cellular providers? Can we use classical philosophical concepts, such as free will vs. determinism, as a means of identifying ethical issues across the emerging machine learning field?

We can begin to answer these questions by returning to the introductory quote by Sam Harris. Harris asks us to consider a thought experiment about determinism. He argues that we are all at the mercy of biological states and neurological impulses of which we are not conscious. Harris wants us to consider one person in complete control of another. But is complete control necessary for determinism to supersede free will? Suppose the mad scientist could control only one behavior, and the control worked only 25% of the time. These instances are still as deterministic as those that occur when the scientist is in complete control; only the frequency and success rate have changed, not the underlying concept.

Assume that it doesn’t matter whom the scientist controls, only that by initiating some action, the scientist can expect the same behavior to happen at the same rate. For example, the scientist puts 100 people in a room, pushes a button, and 25 people touch their nose. He pushes the button again, and 25 people touch their nose. Whether or not the same people touch their nose each time the button is pressed is immaterial, so long as pushing the button leads to 25 people touching their nose.

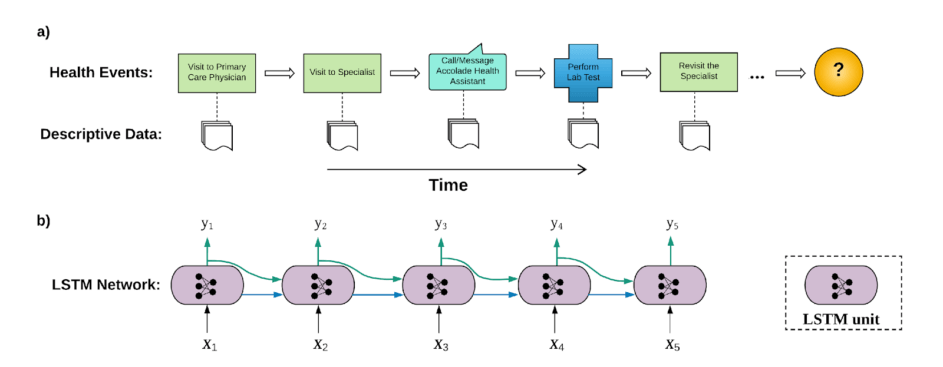

This last scenario may resemble nothing more than an ML-informed A|B test. In a traditional A|B test, groups A and B are assumed to be similar and the interventions they receive differ. In an ML-informed A|B test, we may assume that the intervention is the same but the groups differ, leading to different success rates for groups A and B.

A successful marketing model, perhaps one based on an XGBoost analysis of users’ photo-sharing behavior, will have a success rate of x + γ, where x is the success rate expected from a randomly selected sample and γ is greater than 0. One may consider this value γ to be a quantifiable measure of determinism: (i) a sample of people susceptible to a given intervention has been identified by a model, (ii) the intervention has been applied, and (iii) a percentage of the sample, greater than what would be expected if the sample had been identified by random chance, is affected by the intervention. The intervention has applied deterministic forces upon a set of people, who engaged in a behavior they would not otherwise have engaged in absent an intervention informed by an ML model. The implementation of an ML algorithm has determined that a set of people is susceptible to behavior modification, identified them, and set forth an external pressure to elicit said modification.
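As a rough illustration of the arithmetic, the value the essay calls γ can be read as the gap between the success rate in a model-selected group and the rate in a randomly selected one. The sketch below assumes that reading; the function name `excess_success_rate` and all of the counts are hypothetical, invented only to make the calculation concrete.

```python
def excess_success_rate(targeted_successes, targeted_size,
                        baseline_successes, baseline_size):
    """Return (x, gamma).

    x     : success rate in a randomly selected (baseline) group
    gamma : how far the model-selected group's rate exceeds x
    """
    if targeted_size <= 0 or baseline_size <= 0:
        raise ValueError("group sizes must be positive")
    x = baseline_successes / baseline_size
    targeted_rate = targeted_successes / targeted_size
    return x, targeted_rate - x


# Hypothetical counts, not taken from any real campaign.
x, gamma = excess_success_rate(
    targeted_successes=50, targeted_size=100,   # model-selected group
    baseline_successes=25, baseline_size=100,   # randomly selected group
)
print(f"baseline x = {x:.2f}, gamma = {gamma:.2f}")
```

Under this reading, a γ of zero would mean the model identified no one who was more susceptible than chance, and a larger γ would correspond to more of the "deterministic pressure" the essay describes.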

If certain implementations of ML algorithms can resemble deterministic behavior, then one needs to consider whether it is ever appropriate for machine learning to be used in a deterministic fashion. If not, can we define clear guidelines as to how ML should be applied by posing these questions: (i) what defines determinism in the context of machine learning? (ii) under what circumstances is it appropriate? (iii) should there be constraints based on the type of data used and how it is gathered? and (iv) how does one identify and account for bias in an ML model (all ML models will reflect the assumptions and tendencies of the data scientist who coded the model)? To answer these questions, we need a better understanding of determinism and its relationship to free will.

Defining Ethical Determinism in Four Paragraphs

Determinism is defined in opposition to free will. A “choice” is made because of prior events; those events happened because of prior causes of their own, and so forth. A person makes a choice not because he chooses to of his own volition, but because external forces and stimuli compel him to make a specific choice. The philosophical relationship between determinism and free will can be broken down into three positions: (i) no choices are deterministic (libertarianism); (ii) all choices are in fact deterministic (hard determinism); and (iii) some choices are deterministic while others are not (compatibilism).

The Libertarian argument posits that all choices are based on free will. Per Immanuel Kant, free will exists so long as an action is governed by “Reason” (though this says nothing as to whether the reasoning is correct). [1] In considering the relationship between machine learning and determinism, the Libertarian would state that as long as the person makes a reasoned choice when presented with an ML-driven offer, the fact that he makes the choice the ML algorithm predicted he would make does not mean that the ML algorithm exerted any deterministic pressure.

In direct opposition to the Libertarian, the Hard Determinist argues that all actions occur because of past events. For the Hard Determinist, such as Sam Harris, there is no free will. All actions an individual takes are a response to external events and internal stimuli. It would seem then that an ML algorithm that creates a choice for an individual presents no ethical quandary because (A) the creation of the ML algorithm is itself predetermined, and (B) the ML algorithm’s imposition on an individual’s choice is just one of many external stimuli, with no reason to suspect that it has any more influence on behavior than any other external force.

If Libertarianism and Hard Determinism represent the poles of the determinism/free will scale, Compatibilism occupies the position between these two extremes. Some choices may occur due to deterministic stimuli, but not all. Free will exists, but not everything one does is a result of cognizant, reasoned choice. With Compatibilism, one must consider the interaction between free will and determinism. Can some deterministic events be overridden by free will (the book/film Minority Report centers around this question)? Conversely, can free will be overridden by deterministic forces, such as a model built on extremely granular behavioral data?

Questions for Ethical Machine Learning Through the Lens of Determinist Philosophy

Given the above spheres of determinism, the prior question (is it ever appropriate for machine learning to be used in a deterministic fashion?) becomes significantly easier to parse. For instance, the Libertarian could state that all ML algorithms may be appropriate, as they do not compromise free will. No matter what is being proffered, individuals impacted by the algorithm have the ability to say yes or no. So long as reason dictates choice, machine learning is nothing more than a tool that cannot impose on free will. [2] The Hard Determinist can equally set aside the ethical implications of ML models. If all human behavior is governed by deterministic functions, then an ML model cannot be the proximate or root cause of a given behavior; it is merely another link in an unending causal chain.

It is in the Compatibilist sphere where the ethics of ML algorithms become most relevant. Assuming a person has enough data about another’s behavior, is it possible to manipulate this behavior to achieve a desired outcome? In other words, given enough information, can determinism subsume free will? Can an ML algorithm evoke a moral choice and, if so, can it incentivize immoral behavior? Are the outputs of an ML algorithm amoral, or do they reflect the choices and biases of the scientist who wrote the program?

One need not build an example around Minority Report, with its prediction and interdiction of criminal behavior before it happens, to answer these questions. More benign scenarios, including ones already in use, will do. Per our A|B testing scenario, suppose we have identified a group of people who are 25% more likely to take an offer (and to spend more money as a result) than a randomly selected group, and we market exclusively to them. Can this marketing campaign be considered as imposing a deterministic choice?

Assuming the answer to the above is yes, that we know enough about a group’s behavioral patterns to identify and intervene with measurable success, do the ethics of using the ML model depend on the type of intervention? For example, is an intervention that increases wealth by 15% more ethical to use than one that decreases wealth by 15%? Is the overriding of free will allowed so long as one can point to a positive outcome? Should ML ethics be considered utilitarian in nature, or is there another overriding philosophy that must be considered?

Should the intent (assuming it is knowable) of the person who wrote the ML algorithm be considered in deciding whether its use is ethical? Perhaps the ML model created a 15% increase in wealth, but the overall purpose was to increase wealth so that 10% could be stolen later. Alternatively, perhaps the data scientist wrote an ML algorithm with the intent of increasing wealth by 15%, only to see the opposite effect. In other words, does the intent of the algorithm, or the intent of the creator of the algorithm, matter when considering its overall ethical position? [3]

Finally, does the means by which the ML model is created factor into whether its use is ethical? Consider a marketing model based solely on age, sex and area code vs. one based on text mining a person’s email history. Do the ethics change based on the granularity of the data involved? Does informed consent matter? If a person is unaware that their email history is being used for data modeling (presumably the person did not read the details of the contract), is it ethical to impose deterministic forces so long as there has been prior consent?

A Summary with More Questions

It is important to understand how human behavior is impacted by the expanded use of machine learning algorithms. Most algorithms only care to optimize success and are unconcerned with deontological notions, i.e., the view that the morality of an action should be judged by whether the action itself is right or wrong under a set of rules, rather than by its consequences. The ML algorithm is designed to maximize success; how it goes about achieving this success is generally irrelevant. If one’s choices can be manipulated, and this manipulation can be done at scale to affect millions of people, are we not putting ourselves at the mercy of an amoral machine god and its creator? If free will exists, can it be subverted or manipulated given enough people and enough data?

There are no easy or readily apparent answers to the questions listed above. The astute reader may note that this essay raises many questions and answers none. Unfortunately, moral philosophy is long on questions and short on answers. Yet these are questions that need to be asked whenever an ML model is employed. Data scientists need to consider and address these questions in order to implement an ML model that is ethically defensible. There is no guidebook, but there is still a need for companies and individuals to ask not only what they can do with unlimited data, but also what they should do.

References:

[1] Immanuel Kant, Critique of Practical Reason (1788)

[2] This has nothing to say about whether the ML algorithm itself was created and used in an ethical fashion.

[3] This, perhaps, is a question best asked when considering Aristotle’s Nicomachean Ethics, which treats the ethics of a choice as being represented by a matrix of intent (intentional/unintentional) and outcome (good outcome/bad outcome), wherein the most ethical choices are those that have the best outcome and were intended to have said outcome.