The use of artificial neural networks for machine learning has enabled major advancements in intelligent systems, helping millions of people in their daily lives. During the past decade, progress has greatly accelerated thanks to the availability of massive amounts of data and the use of specialized hardware to build deeper networks and perform faster optimization. Furthermore, better insight into the inner workings of deep neural networks has enabled both researchers and practitioners to achieve improvements in training and generalization (Erhan, 2010; Ioffe, 2015; Srivastava, 2014).

Systems powered by artificial neural networks now shape our experiences and influence our behavior in many settings. We routinely encounter them in e-commerce and web search, as well as in communication interfaces such as smart speakers, messaging, and email applications.

An important area where the use of machine learning is still in its infancy is population health. While deep learning has been used for medical diagnosis applications (Poplin, 2018; Cruz-Roa, 2014), building predictive models for the behavior of healthcare consumers is a relatively unexplored subject. This is a potential use case that we are passionate about at Accolade.

Accolade at a Glance:

Our mission at Accolade is to provide personalized health and benefits solutions to improve the experience, outcomes, and cost of healthcare for employers, health plans, and health plan members. We provide a single point of contact for all health and benefits resources and work with employees and their families to help them utilize the best care options available. Our ability to be proactive about consumer behavior has always been crucial to our mission.

Predicting Members’ Healthcare Usage with Deep Learning

People pursue and obtain healthcare through various channels. For instance, they can visit primary care physicians or specialists, and they may receive care at clinics or hospitals and fill prescriptions at drugstores. However, while they often seek information to help in their decision-making from the internet, friends, and providers, choosing the right healthcare and using it properly has become an increasingly challenging and complex task.

In addition to these conventional channels, Accolade members can call our team of health assistants or reach out to them through direct messaging. Furthermore, our technology alerts our health assistants to changes in members’ health status that may require support and guidance. We consider all of these to be further forms of interaction between our members and the healthcare system.

By drawing on what we know about how our members use healthcare and related benefits, we have considered building models to predict members’ future usage patterns. Such predictions give our team of health assistants valuable insight to use in outreach and guidance. The resulting targeted interventions improve members’ health outcomes and their decision-making about using health and benefit resources, which in turn reduces medical costs.

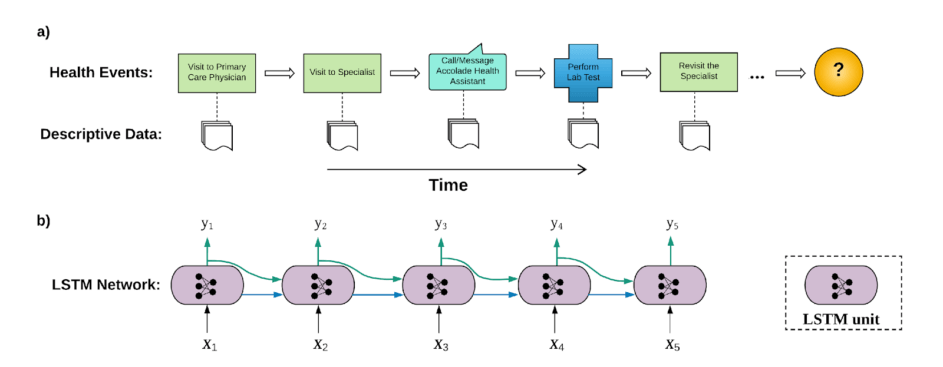

When it comes to learning from our members’ experience over time, events are not isolated from each other. Occurrence of a healthcare event can generally be traced back to a prior event. Let’s make this concrete with the following hypothetical scenario. Fig. 1a) shows a series of events that an Accolade member might experience over time.

Figure 1: a) Sequence of a member’s health events over time. b) An LSTM network learning from the sequence of events in a). {y_i} are the labels corresponding to the events whose feature vectors are {x_i}.

Here, the member visited a primary care physician (event #1), who referred them to a specialist (event #2). For the purpose of diagnosis, the specialist then asked the member to take medical tests (event #4). In the meantime, however, the member decided to consult their dedicated health assistant at Accolade (event #3). The member then returned to the specialist to discuss the results (event #5). Other events may follow.

Clearly, most of these events are the result of other events that happened earlier in the member’s timeline. For example, the lab visit was requested by the specialist, to whom the member was referred after visiting a primary care physician in the first place.
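To make the idea of an ordered event timeline concrete, here is a minimal sketch of how such a sequence could be represented. The `Event` class, the event labels, and the dates are hypothetical and chosen only to mirror the scenario above; they are not our production schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Event:
    member_id: str
    when: date
    kind: str  # hypothetical labels, e.g. "pcp_visit" or "accolade_call"


def to_sequence(events):
    """Return one member's events in chronological order."""
    return sorted(events, key=lambda e: e.when)


# The scenario above, deliberately listed out of order; all dates are made up.
raw = [
    Event("m1", date(2018, 3, 20), "lab_test"),
    Event("m1", date(2018, 3, 1), "pcp_visit"),
    Event("m1", date(2018, 3, 15), "accolade_call"),
    Event("m1", date(2018, 3, 8), "specialist_visit"),
    Event("m1", date(2018, 3, 27), "specialist_visit"),
]
timeline = to_sequence(raw)
kinds = [e.kind for e in timeline]
```

Sorting by timestamp puts the Accolade call between the specialist referral and the lab test, which is the order in which the member actually experienced the events.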

Furthermore, each event comes with data that describe its context. For example, there are diagnosis codes in specialist claims or lab visits, and procedure codes associated with operations or tests performed on members in medical facilities. Combined with member attributes (age, gender, family information, location, employer, etc.), these form comprehensive feature vectors {x_i, i = 1, …} describing individual members and the events they experience as they navigate through the healthcare system.
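As an illustration of how event context and member attributes might be combined into a single feature vector, consider the sketch below. The tiny vocabularies, the choice of one-hot and multi-hot encodings, and the age scaling are all assumptions made for this example; a real encoding would cover far larger code sets and more attributes.

```python
# Hypothetical vocabularies; real code sets are far larger.
EVENT_KINDS = ["pcp_visit", "specialist_visit", "lab_test", "accolade_call"]
DIAGNOSIS_CODES = ["E11.9", "I10", "M54.5"]  # a few example ICD-10 codes


def one_hot(value, vocabulary):
    """1.0 at the position of `value`, 0.0 elsewhere."""
    return [1.0 if value == v else 0.0 for v in vocabulary]


def multi_hot(values, vocabulary):
    """1.0 at the position of every value present, 0.0 elsewhere."""
    present = set(values)
    return [1.0 if v in present else 0.0 for v in vocabulary]


def event_features(kind, diagnosis_codes, member_age):
    """Concatenate event context and member attributes into one vector."""
    return (
        one_hot(kind, EVENT_KINDS)
        + multi_hot(diagnosis_codes, DIAGNOSIS_CODES)
        + [member_age / 100.0]  # crude scaling, for illustration only
    )


x = event_features("specialist_visit", ["I10"], member_age=45)
```

Each event then contributes one fixed-length vector, so a member's timeline becomes a sequence of vectors that a sequence model can consume.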

Having identified event sequences and the feature vectors describing each event, we use recurrent neural networks (Fig. 1b) to learn the underlying trends in members’ healthcare journeys. This enables us to make informed predictions about what is likely to come next in members’ interactions with us or with healthcare providers.

Recurrent Neural Networks

Recurrent neural networks (RNNs) are at the forefront of neural network models used for learning from sequential data. Examples are time series problems and natural language understanding tasks such as machine translation and speech recognition (Cho, 2014; Graves, 2013). What makes RNNs powerful in dealing with sequential data is their stateful design: RNNs have a number of internal states that are updated as consecutive elements of a sequence are processed. These internal states are then used, along with the current input, to predict sequences of outputs. This gives rise to a model whose individual predictions are influenced not only by the current observation but also by the sequence of prior observations.
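The stateful update described above is commonly written as a simple recurrence. The form below is the generic textbook (Elman-style) formulation, shown only for illustration; the weight matrices W, U, V, the biases b, c, and the output function g are notation introduced here and are not taken from our model.

```latex
h_t = \tanh\left(W x_t + U h_{t-1} + b\right),
\qquad
\hat{y}_t = g\left(V h_t + c\right)
```

Here h_t is the internal state after the t-th element of the sequence has been processed. Because h_{t-1} itself depends on all earlier inputs, the prediction at step t depends on the whole history and not just on the current input x_t.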

RNNs come in different flavors that generally differ in the details of the internal computational steps connecting their inputs and outputs. In our case, since sequences of member events can be quite long, we used LSTM (long short-term memory) networks, which are designed to handle long-term dependencies (Colah, 2015).
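For readers who want to see what happens inside an LSTM, the following is a minimal, dependency-free sketch of the standard LSTM step (forget, input, and output gates plus a cell state), as presented in the cited post. It is not our production model: the sizes, the random weights, and all function names are assumptions for illustration, and a real system would use a deep learning framework and trained weights.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def affine(weights, bias, vec):
    """Compute W @ vec + b using plain lists."""
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, bias)]


def lstm_step(params, x, h_prev, c_prev):
    """One LSTM step; the cell state c carries the long-term memory."""
    z = h_prev + x  # list concatenation of previous hidden state and input
    f = [sigmoid(a) for a in affine(params["Wf"], params["bf"], z)]    # forget gate
    i = [sigmoid(a) for a in affine(params["Wi"], params["bi"], z)]    # input gate
    o = [sigmoid(a) for a in affine(params["Wo"], params["bo"], z)]    # output gate
    g = [math.tanh(a) for a in affine(params["Wg"], params["bg"], z)]  # candidate values
    c = [ft * cp + it * gt for ft, cp, it, gt in zip(f, c_prev, i, g)]
    h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]
    return h, c


def init_params(input_size, hidden_size, seed=0):
    """Small random weights and zero biases for the four gates (untrained)."""
    rng = random.Random(seed)
    params = {}
    for gate in "fiog":
        params["W" + gate] = [
            [rng.uniform(-0.1, 0.1) for _ in range(hidden_size + input_size)]
            for _ in range(hidden_size)
        ]
        params["b" + gate] = [0.0] * hidden_size
    return params


def run_sequence(params, xs, hidden_size):
    """Feed feature vectors in order and collect the hidden state after each."""
    h, c = [0.0] * hidden_size, [0.0] * hidden_size
    states = []
    for x in xs:
        h, c = lstm_step(params, x, h, c)
        states.append(h)
    return states


params = init_params(input_size=3, hidden_size=4)
events = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
states = run_sequence(params, events, hidden_size=4)
```

Feeding the same final event after a different history yields a different final hidden state, which is the property that lets the network condition its predictions on a member's earlier events.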

This model is currently used for the following applications:

Identifying High-Cost Claimant Members

One of our mandates at Accolade is to help our customers manage the healthcare spending of their employees. Employers often incur inflated medical costs owing to employees who are heavy users, usually because they make frequent visits to healthcare providers and/or have expensive medical claims. Identifying those people enables our health assistants to engage with them early on to provide guidance, ensure they use their healthcare and benefits properly, and inform them about alternative options available to them through their health plan.

We use RNNs on sequences of our members’ historic claims to predict whether a given member is likely to become a high-cost claimant in a certain time period, for example by the end of the calendar year. The resulting model is periodically applied to the existing medical claims data of individual members to estimate the probability that each member will become a high-cost claimant later in the year. This enables Accolade to identify future high-cost claimants and reach out to them before they actually incur such increased costs.
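One way to frame this as a supervised learning problem is sketched below: the claims known at a cutoff date form the model input, and the label records whether total cost over the full period reaches a threshold. The dollar threshold, the `(date, cost)` claim representation, and the function name are hypothetical and do not reflect how Accolade actually defines a high-cost claimant.

```python
from datetime import date

HIGH_COST_THRESHOLD = 50_000.0  # hypothetical cutoff, not Accolade's definition


def high_cost_example(claims, as_of, period_start, period_end,
                      threshold=HIGH_COST_THRESHOLD):
    """Build one training example from a member's (date, cost) claims.

    history: claims dated on or before `as_of`, i.e. what the model may see
    label:   1 if total cost within [period_start, period_end] reaches
             the threshold, else 0
    """
    history = [(when, cost) for when, cost in claims if when <= as_of]
    total = sum(cost for when, cost in claims
                if period_start <= when <= period_end)
    return history, int(total >= threshold)


# Made-up claims for a single member.
claims = [
    (date(2018, 2, 10), 1200.0),
    (date(2018, 5, 3), 8000.0),
    (date(2018, 9, 21), 46000.0),
]
history, label = high_cost_example(
    claims,
    as_of=date(2018, 6, 30),
    period_start=date(2018, 1, 1),
    period_end=date(2018, 12, 31),
)
```

In this example the model would see only the first two claims, while the label is positive because the September claim pushes the yearly total past the assumed threshold.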

Forecasting Member Interactions

Calls and/or direct messages are another type of event making up sequences of longitudinal health data of Accolade members. These interactions are two of the primary methods of communication with our members. As described earlier, interactions with Accolade are interrelated with claim events. For example, members contact Accolade to inquire about their past or upcoming medical claims.

We train an RNN-driven model on sequences of member claims and call events in order to predict the probability that a member will contact us in any given time period. The more members who are predicted to have a high likelihood of calling Accolade, the larger the call volume we can expect. Anticipating this volume enables us to be proactive about members’ healthcare and benefit needs and plan accordingly for our own staffing requirements.
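The step from per-member probabilities to an anticipated volume can be sketched as follows. By linearity of expectation, the expected number of members who contact us is the sum of their individual probabilities; the spread estimate additionally assumes that members act independently, which is a simplification. The function names and probabilities are made up for this example, and the count refers to members making contact, not to the total number of calls.

```python
import math


def expected_contacts(probabilities):
    """Expected number of members making contact: sum of per-member probabilities."""
    return sum(probabilities)


def contacts_std(probabilities):
    """Standard deviation of that count, assuming members act independently."""
    return math.sqrt(sum(p * (1.0 - p) for p in probabilities))


# Hypothetical model outputs for four members in one time period.
probs = [0.9, 0.5, 0.1, 0.5]
mean = expected_contacts(probs)
spread = contacts_std(probs)
```

A staffing plan could then be based on the expected count plus a margin derived from the spread, rather than on the raw list of probabilities.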

Works Cited:

- Cho, K., et al. (2014). Learning Phrase Representations using RNN Encoder–Decoder for Statistical Machine Translation. EMNLP (pp. 1724-1734). Doha: Association for Computational Linguistics.

- Colah, C. (2015). Understanding LSTM Networks. Retrieved from http://colah.github.io/posts/2015-08-Understanding-LSTMs/

- Cruz-Roa, A., et al. (2014). Automatic detection of invasive ductal carcinoma in whole slide images with convolutional neural networks. SPIE Medical Imaging, 904103–904103.

- Erhan, D., et al. (2010). Why Does Unsupervised Pre-training Help Deep Learning? JMLR, 625-660.

- Graves, A., et al. (2013). Speech recognition with deep recurrent neural networks. International Conference on Acoustics, Speech and Signal Processing (pp. 26-31). Vancouver, BC: IEEE.

- Ioffe, S., & Szegedy, C. (2015). Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. JMLR, 448-456.

- Poplin, R., et al. (2018). Prediction of cardiovascular risk factors from retinal fundus photographs via deep learning. Nature Biomedical Engineering, 158–164.

- Srivastava, N., et al. (2014). Dropout: A Simple Way to Prevent Neural Networks from Overfitting. JMLR, 1929-1958.