What Data Scientists Should Know About the State of Location Data

Introduction

The increasing availability of continuous, precise location data will be a game changer in data science, giving rise to new analysis tools and bringing about a wave of collaboration between data scientists and product teams. It will give data scientists yet another way to influence product decisions and lead to better experiences for users. It will also add to their responsibility to help users without compromising their privacy.

You may remember the Uber app prompting you last year to let it track your location in the background. It promised to collect data only for a small window before your ride, but you couldn’t be totally sure. The only reason cited for the request was a marginal improvement in the Uber pickup process. It sounded like a raw deal for users, but apparently Uber had done its research and knew that many would tap accept.

Uber is not alone in being over-eager for background location data. The Facebook app requests it to show you nearby friends. Google Maps promises to remember where the forgetful parked their cars. Dozens of retailers, such as Urban Outfitters and Bloomingdale’s, ask for the permission in exchange for pushing coupons from nearby stores. And there’s evidence that people are willing to trade privacy for convenience. Perhaps users aren’t afraid to let apps see where they go because of so-called security fatigue (it’s just too much to think about); maybe they trust certain brands to use their data judiciously; or maybe they think they have nothing to hide.

Many of the apps asking for location data have no ill intent and simply use it to enable features that couldn’t otherwise exist. Starbucks notifies you when you’re near one of its stores; credit card companies offer fraud protection by making sure you’re present where your card is being used. Perhaps most of the time, your data is used for nothing but the advertised features and never leaves your phone. Or the collection is transparently communicated and you’re given a chance to delete it, as is the case with the timeline data Google collects. There are instances, however, where historical collection is thinly veiled under the guise of real-time, functional features.

As it becomes easier to put this data to use for better customer engagement, personalization, customer segmentation, ad-targeting, per-user dynamic pricing, and more, we suspect this practice will become more and more common. The trend will create an explosion of this new type of data, give data scientists more powerful tools, and along with it, more responsibility.

Google allows users to view their timeline in detail and delete data retroactively

iOS stores frequently visited locations on device and uses them for ad-targeting and other purposes.

It’s important to think about the opportunities and dangers this presents. If companies, large and small, are asking for location data, we should consider whether it can reveal sensitive information about us that we otherwise wouldn’t consent to share. What are we actually sharing, and what are we getting out of it? Is it a stretch to worry about mere coordinate data being used to compromise our privacy? On the other hand, as a company, how can we use location data to build features good enough to make it a fair exchange? Finally, what big changes are coming to the space in the near future? These are the questions we’d like to answer here.

Signals Hidden in the Noise

Location data in its simplest form is a continuous stream of latitude and longitude pairs, sent from users’ devices at regular intervals. It’s determined by a phone’s GPS sensor under open sky, or otherwise by triangulation, fingerprinting a set of nearby cell towers, Wi-Fi access points, or Bluetooth-emitting devices that are known to correspond to fixed coordinates. It has properties that make it amenable to analysis: it’s structured, relatively homogeneous across users, and usually reliable, since it can’t be misrepresented the way volunteered data can be.

Of course, that doesn’t mean that extracting meaningful, human-interpretable signals from the data is easy work. Years ago, we had the opportunity to work in data science teams with access to geotagged data from millions of users, but this data was virtually unusable for many of the use cases we dreamt up. The cost of making sense of the data was simply too high. Raw data had to be massaged to remove outliers (there can be lots of these, especially indoors), then accurately clustered, and finally turned into actual addresses using a process called reverse geocoding. That way, a series of 50 coordinates can be translated into, say, the specific path between a coffee shop and a restaurant and the dwell time at each location. On top of the difficulties in analysis, sampling the data in the first place is hard: using the GPS chip can drain a phone’s battery, a pet peeve that can lead to your app being deleted.
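As a rough illustration of the cleanup steps described above, the sketch below drops fixes that imply an implausible travel speed and then groups the remaining fixes into stay points with a dwell time. It is a minimal sketch rather than the pipeline any particular team used: the `Fix` type, the function names, and the thresholds (50 m/s, 50 m, 5 minutes) are all assumptions chosen for illustration, and reverse geocoding is left out because it depends on an external service.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0


@dataclass
class Fix:
    t: float    # timestamp in seconds
    lat: float  # latitude in degrees
    lon: float  # longitude in degrees


def haversine_m(a: Fix, b: Fix) -> float:
    """Great-circle distance between two fixes, in metres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def drop_speed_outliers(fixes, max_speed_mps=50.0):
    """Drop fixes that imply an implausible speed from the last kept fix.

    Simplification: the first fix is trusted unconditionally.
    """
    kept = []
    for f in fixes:
        if kept:
            dt = f.t - kept[-1].t
            if dt <= 0 or haversine_m(kept[-1], f) / dt > max_speed_mps:
                continue
        kept.append(f)
    return kept


def stay_points(fixes, radius_m=50.0, min_dwell_s=300.0):
    """Return (lat, lon, arrive_t, leave_t) for each run of consecutive fixes
    that stays within radius_m of the run's first fix for at least min_dwell_s."""
    stays = []
    i, n = 0, len(fixes)
    while i < n:
        j = i + 1
        while j < n and haversine_m(fixes[i], fixes[j]) <= radius_m:
            j += 1
        group = fixes[i:j]
        if group[-1].t - group[0].t >= min_dwell_s:
            lat = sum(f.lat for f in group) / len(group)
            lon = sum(f.lon for f in group) / len(group)
            stays.append((lat, lon, group[0].t, group[-1].t))
            i = j
        else:
            i += 1
    return stays
```

Each stay point's centroid is what would then be handed to a reverse geocoder to obtain an address or venue name. A production pipeline would likely use a more robust outlier filter and a proper clustering algorithm; the speed check and fixed radius here are only the simplest version of the idea.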

APIs to the Rescue

Given the daunting challenges of making sense of location data, it may seem as though we shouldn’t be worried or excited about its power in the right or wrong hands. However, new tools are beginning to change that. Throughout the past decade, talented teams have built solutions that power their own location products and are also being made available to other companies large and small.

Let’s go back in time for a minute. You may remember Google’s Latitude app, one of the first location tracking apps with a large audience. When Latitude first launched in 2009, it used a significant amount of battery power for its data collection and suffered from terribly inaccurate readings, which it failed to correct. As a result, the app would at times see the user jump to the middle of a body of water and back in a matter of seconds. Through the years, however, these issues were ironed out by improvements in hardware, mobile operating systems, and the algorithms used to analyze the data. GPS readings and Wi-Fi triangulation became more accurate. To save power, operating systems started caching location readings and sharing them among apps, and apps learned to sample location data only when necessary, for instance remaining idle while the user is stationary.
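The idea of sampling only when necessary can be sketched as a simple back-off rule: poll quickly while the user is moving and progressively less often while they stay put. The function names, the 25 m movement threshold, and the interval bounds below are assumptions for illustration, not the behavior of any specific operating system or app.

```python
import math


def approx_distance_m(lat1, lon1, lat2, lon2):
    """Equirectangular approximation of distance in metres; adequate over short ranges."""
    mean_lat = math.radians((lat1 + lat2) / 2)
    dx = math.radians(lon2 - lon1) * math.cos(mean_lat)
    dy = math.radians(lat2 - lat1)
    return 6_371_000.0 * math.hypot(dx, dy)


def next_interval_s(prev, curr, current_interval_s,
                    moving_threshold_m=25.0,
                    min_interval_s=10.0,
                    max_interval_s=600.0):
    """Choose how long to wait before the next location sample.

    prev and curr are (lat, lon) tuples from the last two samples.
    If the user moved, sample quickly again; if not, double the wait
    up to max_interval_s so a stationary phone stays mostly idle.
    """
    moved = approx_distance_m(prev[0], prev[1], curr[0], curr[1])
    if moved > moving_threshold_m:
        return min_interval_s
    return min(current_interval_s * 2, max_interval_s)
```

The threshold needs to sit above the typical noise of a stationary reading, otherwise jitter alone keeps the sampler in its fast mode.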

These improvements have allowed companies to use location data to build reliable products and roll them out to a vast number of users. For instance, Google Maps now uses this data to offer real-time and historical foot traffic data for millions of businesses. Foursquare prompts you to check in after automatically detecting the venue you’re visiting. Luckily for data scientists like us, these improvements are now being rolled out as APIs for outside developers as well, sparing us from reinventing the wheel.

Google Maps, for instance, has for a while provided access to its Geocoding and Places APIs, which allow developers to easily turn coordinates into addresses and business names, and vice versa. Its Roads API enables developers to turn a series of coordinates into a path by snapping them onto existing roads. The APIs are used in many popular apps.

Google’s Roads API determines a user’s driving path from a series of coordinates

A much more interesting development is the launch of Foursquare’s Pilgrim SDK last year as part of Foursquare’s pivot towards B2B products. The mobile SDK builds on Foursquare’s years of experience with its own apps, which rely heavily on location understanding. When integrated into a third-party app, the SDK gives the app a chance to react each time its user enters or exits their home or workplace (both of which are inferred by the SDK) or visits any of the 105M+ public places in Foursquare’s database.

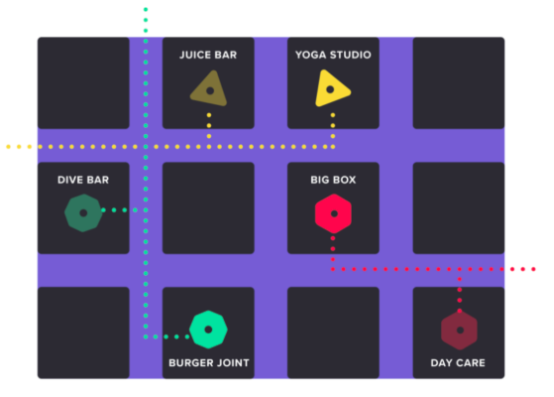

Third-party apps using the Foursquare Pilgrim SDK can use visit data to segment users. Image from Foursquare.com

New GPS Chip Redefining Accuracy

Despite these remarkable tools, there are still significant shortcomings in current hardware that make it hard to pinpoint users reliably, especially in dense urban settings and indoors. In these situations, which of course make up a large portion of a user’s day, even Foursquare’s and Google’s data wrangling often doesn’t help. Apps may ask us to rate our experience at a Nike store while we’re actually grabbing ice cream next door. They most likely won’t be able to tell whether we’re at a hotel bar or just checking in at the lobby nearby.

But that’s also about to change. In the coming years, we should prepare for an exciting future in which those errors virtually disappear and our apps become extremely context-aware.

Let’s start with developments in satellite geolocation. For years, improvements in smartphone geolocation accuracy have been elusive, especially in urban settings where satellite signals can reflect off tall buildings and confuse our phones. Custom receivers that use ground base stations for geolocation have been able to achieve very high accuracy, but according to the U.S. government, the accuracy of GPS with current smartphone chips has remained at around 5 meters under open sky. In 2017, however, Broadcom announced a new chip that does much better. It uses a newer radio frequency named L5, in addition to the L1 frequency that our phones currently use, allowing it to achieve an accuracy of 30 cm, an improvement of more than 15x. The chip is reported to have been included in the designs of several phones slated for release in 2018.

The additional signal also allows for significantly better accuracy in cities’ concrete canyons, due to its ability to distinguish between signals arriving directly from satellites and those bounced off of buildings around the device. And this is only the latest development in a global push for centimeter-level navigation accuracy. You can read more about the technical details of how the chip works and why using the L5 frequency helps here.

Has the Time for Beacons Finally Come?

Of course, improvements in satellite-aided navigation can only help when the user’s phone can see enough satellites in the sky, not when the device is carried indoors. The most common way for smartphones to reliably locate themselves indoors is by sensing Bluetooth Low Energy (BLE) signals emitted by Bluetooth beacons, which are inexpensive chips installed in public spaces. This is typically achieved using the so-called iBeacon protocol. The protocol was introduced by Apple in 2013 and allows devices to constantly listen for beacons with minimal impact on battery life. Each beacon repeatedly advertises a unique iBeacon identifier; the device uses these identifiers to detect the beacons in its vicinity and, by knowing their locations, approximately locate itself.
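One common way to turn beacon sightings into an approximate position can be sketched as follows: estimate the distance to each beacon from its received signal strength using a log-distance path-loss model, then take a distance-weighted centroid of the known beacon positions. This is an illustrative sketch, not the method of any particular SDK. The function names, the default calibrated power of -59 dBm at 1 m, and the path-loss exponent of 2 (free space) are assumptions; real indoor environments usually need per-site calibration.

```python
def estimate_distance_m(rssi_dbm, measured_power_dbm=-59.0, path_loss_exponent=2.0):
    """Log-distance path-loss estimate of the distance to a beacon, in metres.

    measured_power_dbm is the expected RSSI at 1 m (an assumed calibration value).
    """
    return 10 ** ((measured_power_dbm - rssi_dbm) / (10 * path_loss_exponent))


def locate(readings, beacon_positions):
    """Estimate (x, y) in metres as a weighted centroid of known beacons.

    readings:         dict of beacon id -> RSSI in dBm
    beacon_positions: dict of beacon id -> (x, y) on a floor plan
    Returns None if no sighted beacon has a known position.
    """
    total_w = 0.0
    x = y = 0.0
    for beacon_id, rssi in readings.items():
        if beacon_id not in beacon_positions:
            continue
        # Closer beacons get more weight; clamp to avoid division by ~0.
        w = 1.0 / max(estimate_distance_m(rssi), 0.1)
        bx, by = beacon_positions[beacon_id]
        x += w * bx
        y += w * by
        total_w += w
    if total_w == 0.0:
        return None
    return (x / total_w, y / total_w)
```

With two beacons heard at equal strength, the estimate lands midway between them; a stronger signal from one beacon pulls the estimate toward it. Because RSSI is noisy indoors, a real system would typically smooth readings over time before positioning.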

In 2013, the launch of iBeacon caused a wave of excitement and speculation about the possibilities of indoor location. Many retailers, airports, and museums experimented with the technology, typically using it to push simple coupons or notifications to their users. Despite this initial traction, beacons never really took off as anticipated. Several shortcomings limited their adoption. First, early beacon hardware had to be maintained after installation: firmware had to be updated, batteries had to be monitored and replaced, and since the beacons didn’t connect to Wi-Fi, all of this had to be done by a person approaching each beacon, phone in hand, which was an unreasonably costly process. Second, beacons simply didn’t offer many of the features that would bring businesses real value: they could only be used if a business had convinced its customers to install its native mobile app, background tracking was limited (only a limited number of beacons could wake an application), and they had to densely cover a building to be used for navigation, which meant footing a large bill to install and maintain them.

Many of these problems have by now been solved. Several large beacon manufacturers, most notably the Polish company Estimote, have created beacons that are reasonably priced yet reliable, and that allow for remote monitoring and firmware updates through a Bluetooth mesh network. They also allow for much longer replacement cycles and precise location tracking using far fewer beacons. Their offerings drastically reduce maintenance costs and provide much-anticipated features like background tracking and automatic mapping of indoor spaces.

In the past few years, there have also been signs of a decisive push for BLE beacons by Google, which has introduced its own Physical Web and Eddystone projects and is leading the way in developing Web Bluetooth, all with the aim of allowing interaction with BLE devices without the burden of downloading an app. The new capabilities and lower costs may finally tip the balance in favor of beacons, and we may see them enter businesses like hotels, airports, museums, and especially retail stores. The new capabilities might, for instance, help retailers in their fight to stay relevant: beacons could enable them to provide better recommendations to customers by analyzing browsing patterns in stores. Retailers could also use them to optimize product shelf placement and staff management, or to guide customers to items in their stores.

Estimote’s iOS and Android SDKs allow for precise background monitoring for the first time.

Image from estimote.com

Show the App Where You Are

Only time will tell if iBeacon will finally deliver on its initial promise. But now, there’s an even more interesting and promising technology on the block: indoor location powered by visual signals. For a moment, let’s think about how we humans figure out where we are. Suppose you had worked in a large office building for a long while. Now, imagine you were blindfolded and brought to a random location in your workplace. Once the blindfold came off and you looked around, you’d probably be able to tell where you were in a matter of seconds.

Enter VPS. At a developer conference last May, Google revealed its Visual Positioning Service, a successor to its Tango 3D project, which aims to enable devices to precisely locate themselves indoors down to a few centimeters. The tech was described as being “kind of like GPS”, but using “distinct visual features in the environment” instead of satellites. It’s likely that this type of location sensing will improve rapidly, because it can be used not only by smartphones, but also by autonomous robots or drones that need to know their exact position within the confines of concrete walls.

Google tweeted about its new VPS project during Google I/O

Regardless of which technology ends up being the most useful and widely adopted, it’s clear that data scientists are seeing an explosion in the availability and usefulness of location data. As always, it’s up to us to take advantage of it to benefit end users while doing all we can to shield them from the danger of sacrificing their privacy.

Keshav Dhandhania is a cofounder of Compose Labs (commonlounge.com) and has spoken on GANs at international conferences including DataSciCon.Tech in Atlanta and DataHack Summit in Bengaluru, India. He earned his master’s in Artificial Intelligence from MIT, where his research focused on natural language processing and, before that, computer vision and recommendation systems.